This program parses recipes from common websites and displays them using plain-old HTML.

You can use it here: https://www.plainoldrecipe.com/

Screenshots

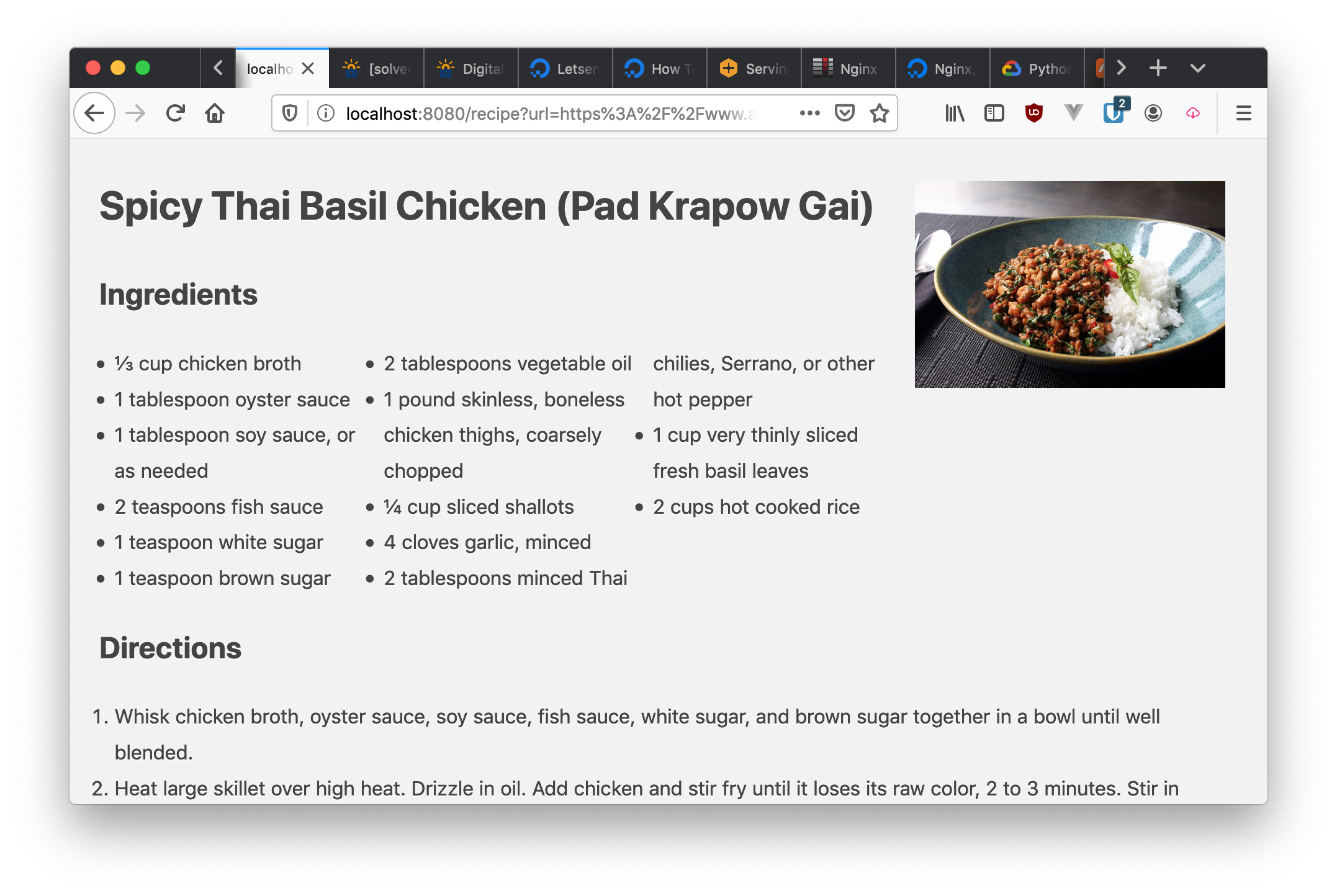

View the recipe in your browser:

If you print the recipe, it shows with minimal formatting:

Building Locally

- Install Python 3.6 or newer
- `git clone https://github.com/poundifdef/plainoldrecipe.git` or download the source and extract
- `cd plainoldrecipe`
- Create a virtual environment (optional)
- `pip install -r requirements.txt`
- `python main.py`

If all goes well you should see something along the lines of:

    python main.py
     * Serving Flask app "main" (lazy loading)
     * Environment: production
       WARNING: This is a development server. Do not use it in a production deployment.
       Use a production WSGI server instead.
     * Debug mode: on
     * Restarting with stat
     * Debugger is active!
     * Debugger PIN: 123-456-789
     * Running on http://localhost:8080/ (Press CTRL+C to quit)

Then open the URL shown on the last line in your browser.
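If you would rather confirm from a script that the development server is answering, a minimal sketch along these lines may help. It assumes the server listens on `http://localhost:8080/`, as in the sample output above; the function name `server_is_up` is made up for this example and is not part of the project.

```python
import urllib.error
import urllib.request


def server_is_up(url="http://localhost:8080/", timeout=3.0):
    """Return True if `url` answers with any HTTP response at all.

    The default URL is an assumption taken from the sample startup output.
    """
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server replied, just not with a success status (e.g. 404 or 500).
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure and similar problems.
        return False
```

Calling `server_is_up()` while `python main.py` is running should return `True`; if it returns `False`, check the port printed in the startup output.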

Deploy

Run deploy.sh

Acknowledgements

Contributing

- If you want to add a new scraper, please feel free to make a PR. Your diff should have exactly two files: an update to `parsers/__init__.py` and a new class in the `parsers/` directory. Here is an example of what your commit might look like.

- If you want to fix a bug in an existing scraper, please feel free to do so, and include an example URL which you aim to fix. Your PR should modify exactly one file, which is the corresponding module in the `parsers/` directory.

- If you want to make any other modification or refactor: please create an issue and ask prior to making your PR. Of course, you are welcome to fork, modify, and distribute this code with your changes in accordance with the LICENSE.

- I don't guarantee that I will keep this repo up to date, or that I will respond in any sort of timely fashion! Your best bet for any change is to keep PRs small and focused on the minimum changeset to add your scraper :)
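To make the two-file shape of a new-scraper PR concrete, here is a rough, self-contained sketch. It is not the project's actual parser interface: the class name `ExampleSite`, the `PARSERS` registry, the `get_parser` helper, the `domain` attribute, and the HTML structure of the made-up site are all assumptions chosen for illustration, and it uses only the standard library. Check an existing module in `parsers/` for the real conventions before writing your own.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ExampleSite(HTMLParser):
    """Hypothetical scraper for a made-up site (think parsers/examplesite.py)."""

    domain = "example.com"  # assumption: scrapers are looked up by hostname

    def __init__(self):
        super().__init__()
        self.recipe = {"name": "", "ingredients": []}
        self._field = None  # recipe field that the current text feeds, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "h1":
            self._field = "name"
        elif tag == "li" and "ingredient" in classes:
            self._field = "ingredients"
            self.recipe["ingredients"].append("")

    def handle_endtag(self, tag):
        if tag in ("h1", "li"):
            self._field = None

    def handle_data(self, data):
        if self._field == "name":
            self.recipe["name"] += data
        elif self._field == "ingredients":
            self.recipe["ingredients"][-1] += data

    def parse(self, html):
        """Feed raw HTML and return the extracted recipe as a dict."""
        self.feed(html)
        self.recipe["name"] = self.recipe["name"].strip()
        self.recipe["ingredients"] = [i.strip() for i in self.recipe["ingredients"]]
        return self.recipe


# Hypothetical registry (think parsers/__init__.py): one new entry per scraper.
PARSERS = {ExampleSite.domain: ExampleSite}


def get_parser(url):
    """Return a parser instance for `url`, or None if the site is unsupported."""
    host = urlparse(url).netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    cls = PARSERS.get(host)
    return cls() if cls else None
```

In this sketch the class would be the new file and the one-line registry entry would be the only change to the shared file, which keeps the diff small and easy to review.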

Testing PRs Locally

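To try out a pull request before it is merged, fetch it into a local branch, replacing ID with the pull request number and BRANCHNAME with a name of your choice for the new branch:

    git fetch origin pull/ID/head:BRANCHNAME

Then switch to it with `git checkout BRANCHNAME`.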